A couple of years ago, I was invited to attend my first AIM workshop, and it was finally held this last week. The topic was Frame theory intersects geometry, and as the name suggests, this workshop was an experiment of sorts to get two different communities together to solve various problems. Specifically, we focused on how to leverage techniques from algebraic geometry to solve certain infamous problems in frame theory, and with a number of successes. This entry overviews these successes.

— Part b of the 4M-4 conjecture is true —

Phase retrieval served as one of the main thrusts of this workshop. To help get the ball rolling on this subject, Thomas Strohmer and I gave survey talks on the subject (here are my slides). As a workshop, we quickly put our sights on the 4M-4 conjecture, which states that 4M-4 intensity measurements are necessary and generically sufficient for injectivity. I detail this conjecture in this blog post, where I also offer $100 for the solution. In that post, I indicated that part b (generic sufficiency) is probably the lower-hanging fruit. In another post, I interviewed Vlad Voroninski about his recent proof that 4 generic unitaries suffice for injectivity, and he indicated that similar proof techniques should resolve part b. This week, these suspicions proved correct, as part b was solved (or at least an extremely plausible argument was sketched and will be written up rigorously soon).

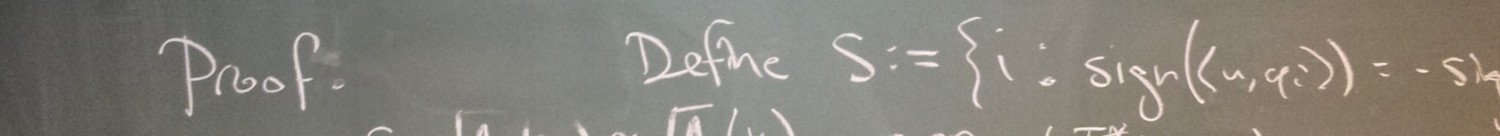

The argument is mostly due to Dan Edidin and Cynthia Vinzant, and the basic ideas used are very similar to those in Vlad’s proof for 4 unitaries. Consider the set of all complex matrices

. This set has real dimension

. The goal is to show that the set of “bad”

‘s (those which fail to yield injectivity) has strictly smaller dimension. To do this, note that

is bad precisely when there is an

matrix

of rank

and Frobenius norm 1 such that

for every

(this is Lemma 9 of this paper). As such, we lift to the set of pairs

which satisfy this relation. Counting the dimension of this lifted set, we note that the set of

‘s has

real dimensions, and for each

and each

, there is a

-dimensional set of

‘s such that

. Thus, the total dimension of bad pairs

is

. Recall that the bad set we care about is the set of

‘s for which there exists a

such that

is bad, and so we get our set by projection. Also, projections never increase the dimension of a set, and so the dimension of our set of bad

‘s is

. As such, to ensure that this dimension is less than the ambient dimension

, it suffices to have

, or equivalently

. Thus, generic

‘s with

are not bad.

This improves Dan Edidin’s previous result that generic measurements yield injectivity. I’m looking forward to reading the rigorous version of this proof. I’ll have to think about what fraction of the $100 prize this deserves (I expect the proof of part a to be very difficult in comparison).

— The set of unit norm tight frames is path-connected —

In a previous paper with me, Jameson Cahill conjectured that full spark unit norm tight frames (UNTFs) are dense in the entire set of UNTFs. To clear, unit norm tight frames are matrices such that the rows are orthogonal with squared norm

, and the columns simultaneously have unit norm, while full spark

matrices have the property that every

submatrix has nonzero determinant. As such, both of these sets are defined in terms of algebraic varieties (UNTFs form a variety, while full spark matrices form the complement of another variety), and the desired result can be proved by establishing that polynomials from the two ideals are sufficiently independent in some sense. Another proof technique is to establish that UNTFs form an irreducible variety, since this would imply that every Zariski-open subset (such as the set of full spark UNTFs) is dense. To establish irreducibility, it suffices to demonstrate that the variety is both connected and nonsingular. Unfortunately, UNTFs are frequently singular, as they lack singularities precisely when

and

are relatively prime (see this paper). But at least the proof technique has legs in this case; it remains to show that the variety is connected.

Actually, the connectivity of the variety of UNTFs has been an open problem for about a decade. It is known to not be connected when or

: For

, the variety is essentially the orthogonal group, which is disconnected according to the sign of the determinant, and for

, the set of simplices have similar issues with orientation. Are real UNTFs also disconnected when

? At the workshop, I found a definitive answer to this question with Jameson Cahill and Nate Strawn: No!

Our proof is similar in spirit to the proof that the unitary group is connected: Take any unitary matrix , and unitarily diagonalize it as

(this is possible since

normal). Since

is unitary,

has all unit-modulus entries on the diagonal, and by wiggling these entries, we can make a continuous path

of diagonal matrices such that

and

. Note that this continuous path connects

to

by

, and so any pair of unitaries can be connected by exploiting this “hub.”

In approaching the set of UNTFs, we didn’t want to apply an SVD since we didn’t know how to enforce the unit-norm-columns constraint in this decomposition. Instead, our proof leverages a sort of polar decomposition of UNTFs which is provided by the recent theory of eigensteps. To be clear, for every UNTF

, there is a corresponding

matrix of eigensteps

, whose

th column gives the

singular values (squared) of the first

columns of

. Interestingly, the norms of the columns of

are encoded in the differences between adjacent columns in

. Also, the set of

‘s which are formed by all possible UNTFs are characterized by interlacing inequalities, and so this set forms a convex polytope. Think of

as the “radial” component of a decomposition. The angular component is then a sequence of unitary matrices of different dimensions which can be used to reconstruct any UNTF from its eigensteps matrix (this is a rather technical process, but it is described in Theorem 7 of this paper). What this decomposition provides is a convenient parameterization of UNTFs — it’s convenient because the parameter spaces are sufficiently connected when

, and so we can leverage this connectivity to prove connectivity in the whole space of UNTFs (similar to how the connectivity of diagonal matrices with complex unit-modulus entries was used to prove the connectivity of

).

We’re currently writing this up, and I’ll post a link here once we put it on the arXiv.

— A new characterization of the existence of equiangular tight frames —

Equiangular tight frames (ETFs) are UNTFs with the additional constraint that the absolute values of the inner products between pairs of columns are all equal. As detailed in a previous post of mine, ETFs can be characterized as matrices with unit-norm columns

such that

for every pair . This shows that ETFs form an algebraic variety (in the real and imaginary parts of the entries of the

‘s), and the workshop attempted to leverage algebraic geometry accordingly. Specifically, we attempted to leverage Hilbert’s Nullstellensatz in a certain way: If there is an

ETF, then this forms a real point in the corresponding algebraic variety, which in turn implies the existence of a complex point in the variety, which then implies that 1 is not in the ideal generated by the variety’s polynomials. By the contrapositive, if we can express 1 as a “linear combination” of the variety’s polynomials (where the scalars in the combination can be any other polynomials), then there is no

ETF. As such, the polynomials which are used in this combination form a “certificate” of the nonexistence of

ETFs. The danger here is that 1 might not be in the ideal even though an ETF doesn’t exist, but we found hope in an example: 1 is in the ideal when

and

, meaning this approach successfully detected nonexistence. This motivated us to find patterns in the polynomials so we could construct a general certificate, thereby producing necessary conditions for existence which would rival the currently known integrality conditions. Unfortunately, this didn’t go anywhere — we stared at those polynomials for several hours, and found the pattern-recognition process to be rather frustrating.

Following the lead of Ferenc Szollosi, the group then turned its attention to another characterization, which proved to be more useful: There exists an ETF

if and only if there exists an

matrix

such that

(i)

(ii)

(iii) for every

(iv) whenever

,

and in such cases, . Note that (i)-(iv) can be expressed as polynomials in the entries of (the real and imaginary parts of)

, and so this is another formulation of ETFs as an algebraic variety. However, this formulation is a bit more interesting in the case of real ETFs: Every point in this variety is necessarily real because (iv) will only have real roots! As such, the trick of expressing 1 as a linear combination will always successfully detect nonexistence. This implies something that I find particularly interesting:

It was already known that real ETFs correspond to strongly regular graphs of certain properties. As such, a computer can easily determine whether an ETF exists after some finite amount of computation (just exhaustively search through all graphs of a certain size until it finds a strongly regular graph with the right parameters). Moreover, when it exists, the computer can print the adjacency matrix of this graph to certify the existence of an

ETF, and I can use this graph to quickly construct an ETF. But before I knew this identification using algebraic geometry, it was not clear to me how a computer could certify nonexistence. Certainly,

and

necessarily satisfy certain integrality conditions, but these necessary conditions are not sufficient, and so there could have been some pairs

for which nonexistence would be difficult to check. Now that I have this identification, I can program a computer to run Buchberger’s algorithm when a graph is not found, and then output the polynomials used in the combination that puts 1 in the ideal. Then I can “easily” verify the combination (I use quotes here because I’m assuming the degrees of the polynomials are not terribly large). Unfortunately, Buchberger’s algorithm is a doubly exponential-time algorithm, so we can’t expect it to terminate quickly in general. (To detect ETFs, it’s probably faster to exhaustively search through graphs!)

There is still hope that one can find patterns in these polynomial certificates to prove more general necessary conditions on ETF existence. Also, for the complex case (where such necessary conditions are currently absent), Cynthia Vinzant suggested that Stengle’s Positivstellensatz could be of use.

— Grassmannian line packings are necessarily frames —

In many engineering applications, there is a need for unit-norm vectors which are particularly incoherent, meaning

is small whenever

. To see how incoherent such vectors can be made, Welch proved the following bound in 1974:

.

In the previous section, we mentioned that ETFs achieve equality in this bound, and it turns out that ETFs are the only ensembles which achieve equality here (see this post). In the engineering literature, ETFs are often called Welch-bound equality sequences, accordingly. Note that by the Welch bound, ETFs are the minimizers of worst-case coherence whenever they exist, but sometimes they don’t. What can be said in these cases?

Geometrically, you should think of as the cosine of the acute angle between the lines spanned by

and

, and so what we’re actually asking for is information about optimal line packings. Since lines (and more generally,

-dimensional subspaces of

) form points in the Grassmannian

, we can alternatively think about this as a packing in a Grassmannian space. From what I’ve seen, this identification is not as helpful for proving theorems as it is useful when talking to geometers (the Grassmannian is a smooth manifold, so it tends to be a decent source of interesting examples, etc.).

Let denote the smallest possible worst-case coherence among ensembles of

unit-norm vectors in

(this is well-defined since worst-case coherence is a continuous function and the set of unit-norm ensembles is compact). Note that

, since removing any vector from an

-ensemble produces an

-ensemble of equal or better worst-case coherence. Perhaps surprisingly, this inequality is not strict in general: As established in this paper, the vertices of the icosahedron span 6 optimally packed lines in

, and the removal of any of these lines forms an optimal packing of 5 lines. Similarly, we have

, since I can embed an optimal

-dimensional packing into

dimensions. But is the inequality necessarily strict?

On the last day of the workshop, I worked on this with Felix Krahmer and Thomas Strohmer, and we established that indeed, the inequality is strict. The trick is to figure out how to perturb an embedded packing to improve it, similar to how an embedded simplex can be lifted to improve its coherence. From the perspective of frame theory, this is interesting because it implies that optimal line packings are necessarily frames: Any line packing which is not a frame is contained in a hyperplane, meaning it corresponds to a lower-dimensional line packing which has strictly suboptimal coherence. From the perspective of applications, this is useful because the known upper bounds on achievable worst-case coherence are rather poor — when ETFs don’t exist, there are sometimes deterministic constructions which do okay, and otherwise, the available random techniques produce ensembles whose coherence is a whole log factor away from the Welch bound. Conceivably, this embed-and-perturb method will lead to better theorems in these less-understood cases.

One thought on “AIM workshop: Frame theory intersects geometry”