Last year, I wrote a blog post that describes how phase retrieval applies to quantum mechanics. The main idea is that the probability distribution of outcomes of a particular observable provide intensity measurements of the (pure) state vector with the observable’s eigenstates. In other words, each observable corresponds to a unitary matrix, with which we will effectively take intensity measurements of the desired state vector. In a paper from 1978, Andrew Vogt mentioned Wright’s conjecture – that 3 observables suffice to determine the state vector up to phase. This was inadvertently disproved in 2011 by Teiko Heinosaari, Luca Mazzarella and Michael M. Wolf (see this paper), who showed that measurements are necessary for injectivity over

. The natural question is then:

Do 4 observables suffice to completely determine a pure state?

The answer (in the affirmative) appeared on the arXiv today:

Determination of all pure quantum states from a minimal number of observables

Damien Mondragon, Vladislav Voroninski

In fact, Mondragon and Voroninski show something much stronger: four generic unitary matrices lend injective intensity measurements. Having spent a considerable amount of time thinking about this sort of problem, I was very excited to see this closure to Wright’s conjecture, and I decided to interview one of the authors (Voroninski). I took the liberty of adding hyperlinks throughout:

DGM: Based on my experience, the biggest difficulty with this problem is finding a rigorous way to count the dimensions of (intersections of) manifolds. In particular, since the manifolds which arise in phase retrieval injectivity arguments are real, most of algebraic geometry doesn’t apply (e.g., the projective dimension theorem). What tools do you use to get around this difficulty, how do you use them (in the big picture), and where can I go to learn more about them?

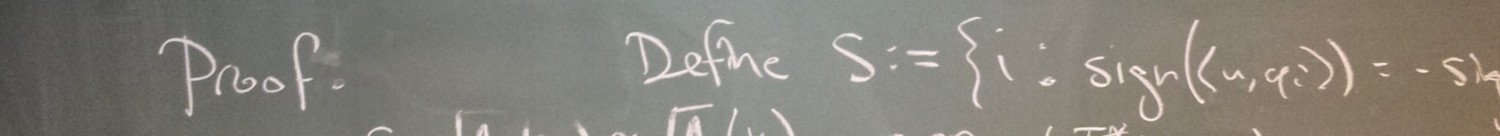

VV: Indeed, things are not as nice in algebraic geometry over the real numbers. Smooth points are not necessarily dense, which makes dimensions of algebraic sets harder to compute. We used two main tools: a resolution of singularities and the Nash stratification. There is a notion of Zariski tangent space at any point of an algebraic set, which is just the null space of the differential of the defining polynomials at that point. If smooth points (points at which the dimension of the Zariski tangent space matches the dimension of the variety) were dense, it would be enough to show that the tangent spaces have the desired dimension on a dense set. In our case, while it was doable (but messy) to compute the dimension of the Zariski tangent spaces at every point, it didn’t help in showing what the dimension of the variety at hand is. Instead, we used a resolution of singularities, which is effectively an auxiliary variety which fills in the singularities of the original, thus being a smooth variety (the dimension of each tangent space is the same) of the desired dimension. Then, by manufacturing a semialgebraic surjective map from the auxiliary to the original, we were able to claim that the original has at most the desired dimension (which was enough for our purposes), since semialgebraic maps cannot increase dimension.

The above technique upper-bounds the algebraic dimension of the varieties at hand, but our argument relies heavily on differential geometry, so at the end of the day we needed to work with smooth objects. While an algebraic set doesn’t have to be a manifold, or even smooth, it has to be a union of Nash manifolds, which constitutes the Nash stratification of an algebraic set. Using this stratification, we expressed the “bad” set of non-injective mod phase maps in question as a union of submanifolds. Each such submanifold was constructed to be invariant under a particular subgroup of the unitary group and thus we could factor through the quotient given by the action of this subgroup on the way to the total quotient. We picked that subgroup so that the space through which we factor through has a smaller dimension than the final image space, allowing us to invoke Sard’s theorem to claim that the image under the global quotient of the “bad” set has measure zero.

We used a very nice text, called “Real Algebraic Geometry”, by Jacek Bochnak, Michel Coste and Marie-Francoise Roy, which details the tools of real algebraic geometry.

DGM: Do you think similar ideas can be used to solve the 4M-4 conjecture? Or at least part (b)?

VV: For part (b), definitely. We actually gave part (b) a couple days of thought using our methods and we are quite close to this (of course, as usual, the last 10% always takes the most time). We’re currently working out the details and hope to write a proof of part (b) of the 4n-4 conjecture into a later version of this paper. Part (a), on the other hand, is a mystery to me at the moment and I don’t see a straight-forward way of doing it using these methods.

DGM: Do your methods suggest a routine to test whether a given collection of unitary matrices lends injective intensity measurements? If not, does this problem seem to be within reach?

VV: The methods make statements about generic matrices, so I don’t think it’s possible to translate that into a property of a particular matrix. Even in trying to make injectivity statements about more structured, yet still not deterministic, measurement ensembles, like , one runs into trouble with using these methods because they rely heavily on symmetry. Does the problem seem within reach? I think it’s a pretty hard problem. In essence, it’s asking about when a particular quadratic map from

into

is an embedding and while it may be possible to check that such a map is an immersion, ruling out self-intersections is always a difficult thing to do. It seems that there will have to be a fair amount of algebraic structure involved in particular measurements which are injective.

DGM: In the acknowledgments, you say “A special thanks goes out to Y Combinator, for providing an entirely orthogonal effort, during which the ideas for this paper were conceived as a hedge.” What is this all about?

VV: It was two days before I was due to interview at YCombinator (a startup accelerator in Silicon Valley). I had been preparing for about a week straight, so all I had been thinking about was a business plan for a computer vision company and as a result, was jonesing to do some math. So I decided to hedge my bets (correctly on both accounts as it turned out!) and spend a day perusing some papers, something I rarely do. I was reading “Saving phase: Injectivity and stability in phase retrieval” when I had an idea for the first part of the approach and spent the rest of the day consumed by it. The next month was dedicated to working with my co-author Damien Mondragon on completing this paper. So, thanks YC!

One thought on “Determination of all pure quantum states from a minimal number of observables”