I recently finished writing the paper based on a talk I gave at MATHEON at TU Berlin last year. (The talk was very fun to give — halfway through, it featured a game show in which Felix Krahmer won a bottle of Tabasco.) This work was a collaboration with Afonso Bandeira, Matt Fickus and Joel Moreira, and in the original version (presented in my talk), we mixed two ingredients to derandomize Bernoulli RIP matrices:

(i) a strong version of the Chowla conjecture, and

(ii) the flat RIP trick from this paper.

The Chowla conjecture essentially states that as $N$ gets large, if you randomly draw $n$ from $\{1,\ldots,N\}$, then consecutive entries of the Liouville function $\lambda(n+1),\ldots,\lambda(n+k)$ are asymptotically independent. The strong version of the Chowla conjecture that we used in (i) not only gave asymptotic independence, but also provided a rate of convergence. The intuition is that consecutive Liouville function entries behave very similarly to iid Bernoulli $\pm 1$'s, and so we should expect that populating a matrix with these entries will yield RIP. Indeed, we used flat RIP to demonstrate random-like cancellations that prove RIP. Unfortunately, the strong version of the Chowla conjecture that we used implies the Chowla conjecture, which has been open for almost 50 years.
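To get a feel for the "consecutive entries look like coin flips" intuition, here is a minimal Python sketch that samples short windows of the Liouville function and tabulates how often each sign pattern appears. The function names, the window convention (starting at `n + 1`), and the parameters `N`, `k`, `trials` are illustrative assumptions, not notation from the paper, and a finite experiment like this says nothing rigorous about the conjecture.

```python
import random


def liouville(n):
    """Liouville function: (-1)**Omega(n), where Omega counts prime
    factors of n with multiplicity (trial division, fine for small n)."""
    sign = 1
    d = 2
    while d * d <= n:
        while n % d == 0:
            n //= d
            sign = -sign
        d += 1
    if n > 1:  # one remaining prime factor
        sign = -sign
    return sign


def sample_window(N, k, rng):
    """Draw n uniformly from {1, ..., N} and return the k consecutive
    values lambda(n+1), ..., lambda(n+k) (indexing is an assumption)."""
    n = rng.randint(1, N)
    return tuple(liouville(n + j) for j in range(1, k + 1))


def pattern_frequencies(N, k, trials, seed=0):
    """Empirical frequency of each sign pattern over `trials` draws."""
    rng = random.Random(seed)
    counts = {}
    for _ in range(trials):
        w = sample_window(N, k, rng)
        counts[w] = counts.get(w, 0) + 1
    return {w: c / trials for w, c in counts.items()}


if __name__ == "__main__":
    for pattern, freq in sorted(pattern_frequencies(10**5, 3, 5000).items()):
        print(pattern, round(freq, 3))
```

If the entries were exactly independent fair signs, each of the $2^k$ patterns would show up with frequency close to $2^{-k}$; the strong form of the conjecture is what would quantify how close.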

A couple of months ago, Peter Sarnak pointed out a way around this difficulty. (No, he didn’t prove the Chowla conjecture.) It turns out that consecutive Legendre symbols exhibit asymptotic independence with the exact convergence rate that our proof requires. (!) This was originally proved by Harold Davenport, and the version of the result that we use is Theorem 1 in this paper. This changes our construction slightly (just replace Liouville with Legendre) and produces a main result which is no longer dependent on a conjecture:

Theorem. Given ,

and

, take

with and

sufficiently large, and let

denote any prime

. Draw

uniformly from

, and define the corresponding

matrix

entrywise by the Legendre symbol

Then satisfies the

-restricted isometry property with high probability.
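As a rough illustration of this kind of construction, the following Python sketch fills a matrix with consecutive Legendre symbols starting from a random seed. The names `legendre` and `legendre_matrix`, the column-by-column indexing `x + M*j + i`, the $1/\sqrt{M}$ normalization, and the range from which `x` is drawn are all assumptions made for the sake of a runnable example; consult the paper for the exact entry formula and for the requirements on the prime and the number of rows.

```python
import math
import random


def legendre(a, p):
    """Legendre symbol (a/p) for an odd prime p, via Euler's criterion:
    a**((p-1)/2) mod p is 1, p-1, or 0."""
    r = pow(a % p, (p - 1) // 2, p)
    return -1 if r == p - 1 else r


def legendre_matrix(M, N, p, x):
    """M-by-N matrix (list of rows) whose entries are consecutive Legendre
    symbols mod p starting near the seed x.
    ASSUMPTION: entry (i, j) uses argument x + M*j + i, i.e. consecutive
    symbols are laid out column by column, and entries are scaled by
    1/sqrt(M) so that columns have roughly unit norm."""
    scale = 1.0 / math.sqrt(M)
    return [[scale * legendre(x + M * j + i, p) for j in range(N)]
            for i in range(M)]


if __name__ == "__main__":
    p = 1_000_003                # a prime chosen only for illustration
    x = random.randrange(p)      # ASSUMPTION: seed drawn uniformly mod p
    Phi = legendre_matrix(8, 20, p, x)
    print(len(Phi), len(Phi[0]))
```

The only randomness is the single seed `x`, which costs about $\log_2 p$ random bits; everything else in the matrix is determined by arithmetic modulo `p`.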

In the small- regime, specifically

, this is the most derandomized RIP matrix to date with

entries and

. In the large-

regime (

), the record goes to a Johnson–Lindenstrauss alternative that leverages

-wise independent entries for some

(this is a consequence of Theorem 2.2 in this paper). The most derandomized RIP matrix without the

entry constraint comes from an iterative application of this JL alternative (see this paper). For all three of these constructions, the number of random bits used is

; in fact, two log factors appear in the entropy of each construction, the only difference being the type of log factors that appear.

Interestingly, JL projections necessarily require at least a certain number of random bits in order to nearly preserve the length of every vector with high probability. As such, unlike RIP matrices, JL projections can only be derandomized so much, and the derandomized JL projections mentioned above are within log factors of optimal. This leads to the following problem:

Problem (Breaking the Johnson–Lindenstrauss bottleneck). Find a construction of matrices which satisfies the

-restricted isometry property with high probability whenever

and uses only

random bits.

Curiously, our Legendre symbol–based construction does not break the JL bottleneck.

Note that our main result didn’t use anything particularly special about the Legendre symbol (except its pseudo-randomness properties). In fact, as we show in the paper, our main result has a more general version which explicitly uses the convergence rate of asymptotically independent Bernoulli random variables. Such random variables are known in the literature under the buzzword small-bias sample space, and several constructions of small-bias sample spaces exist to date. However, as we show in the paper, no small-bias sample space can be used to break the JL bottleneck with our techniques (!), and of the constructions that currently exist, only the Legendre construction naturally leads to a fully derandomized RIP matrix conjecture:

Conjecture. There exists a universal constant such that for every

, there exists

and

such that for every

satisfying

and for every prime , the

matrix

defined entrywise by the Legendre symbol

satisfies the -restricted isometry property.

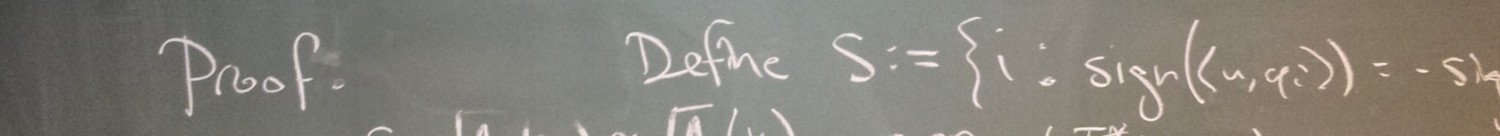

One might apply flat RIP to approach this conjecture, and interestingly, the conjecture statement essentially becomes a bound on incomplete sums of Legendre symbols, not too different from those considered in this paper. Obviously, we had difficulty proving these cancellations, and so we resorted to injecting randomness into our construction.
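For readers who have not met incomplete sums of Legendre symbols before, here is a small Python sketch of the simplest case: sums over an interval of consecutive integers. It computes the largest such sum for a given prime, which can then be compared with the classical Pólya–Vinogradov scale $\sqrt{p}\log p$. The function names are hypothetical, and the sums that flat RIP actually requires are over more structured index sets than a single interval, so this shows the flavor of the cancellation rather than the conjecture itself.

```python
import math


def legendre(a, p):
    """Legendre symbol (a/p) for an odd prime p, via Euler's criterion."""
    r = pow(a % p, (p - 1) // 2, p)
    return -1 if r == p - 1 else r


def max_incomplete_sum(p):
    """Largest |sum of (n/p) over an interval of consecutive n| with the
    interval contained in {1, ..., p-1}, computed from prefix sums:
    every interval sum is a difference of two prefix sums."""
    prefix = [0]
    for n in range(1, p):
        prefix.append(prefix[-1] + legendre(n, p))
    return max(prefix) - min(prefix)


if __name__ == "__main__":
    for p in (101, 1009, 10007):
        print(p, max_incomplete_sum(p), round(math.sqrt(p) * math.log(p), 1))
```

A trivial bound on an interval sum is the length of the interval; the interest lies in how much smaller the true value is, which is the kind of random-like cancellation the conjecture would need.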
